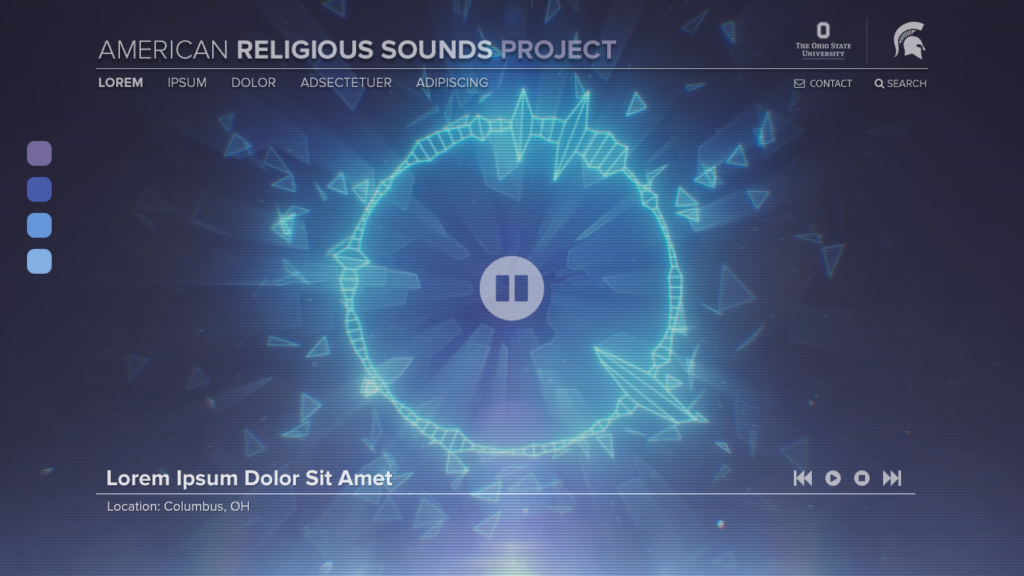

American Religious Sounds

Client:

The Ohio State University

Role:

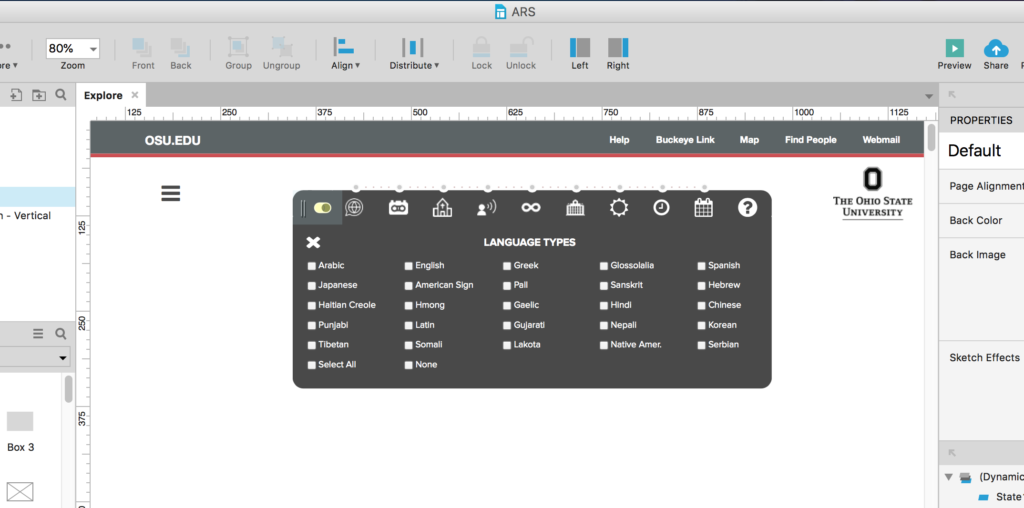

Creative Lead, UX Design, Motion Graphics

Project:

Web Site

Tech:

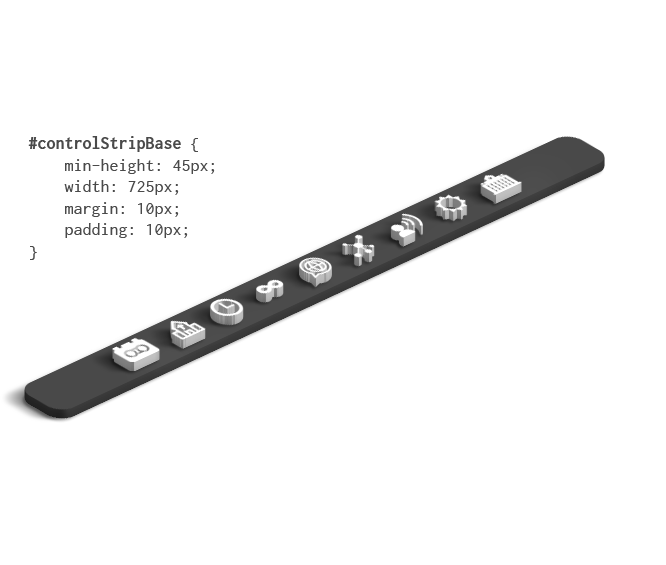

Sketch, Adobe Creative Cloud, Axure RP